
4/25/2023 Cosine similarity formula

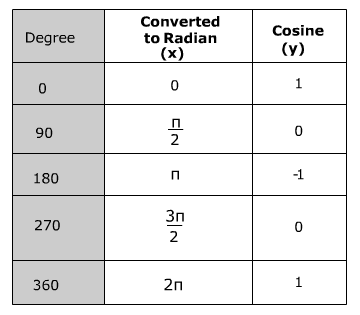

Cosine similarity measures the angle between two vectors in a multi-dimensional space, with the idea that similar vectors point in a similar direction. It is commonly used in Natural Language Processing (NLP), where it measures the similarity between documents regardless of their magnitude. This is advantageous because two documents can be far apart by Euclidean distance and still have a small angle between them. For example, if the word 'fruit' appears 30 times in one document and 10 times in the other, that is a clear difference in magnitude, but the documents can still be similar if we only consider the angle. The smaller the angle is, the more similar the documents are.
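As a rough illustration of the point above, the sketch below computes cosine similarity as the dot product divided by the product of the vector lengths, cos(θ) = (A · B) / (‖A‖ ‖B‖), and compares it with the Euclidean distance. The two-word vocabulary and the 'juice' counts are made up for illustration; only the 30 and 10 counts for 'fruit' come from the example in the text.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same number of dimensions")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("cosine similarity is undefined for a zero vector")
    return dot / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


# Hypothetical word counts for the vocabulary ("fruit", "juice").
doc_a = [30, 15]  # 'fruit' appears 30 times
doc_b = [10, 5]   # 'fruit' appears 10 times, same proportions as doc_a

print(round(cosine_similarity(doc_a, doc_b), 3))   # 1.0, same direction
print(round(euclidean_distance(doc_a, doc_b), 2))  # 22.36, far apart in magnitude
```

The two documents use the words in the same proportions, so the angle between them is zero even though the counts differ by a factor of three.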

If you already have a working knowledge of Vector Search, then you can skip straight to the Cosine Distance section.

Vector databases keep the semantic meaning of your data by representing each object as a vector embedding. Each embedding is a point in a high-dimensional space. For example, the vector for bananas (both the text and the image) is located near apples and not cats. The above image is a visual representation of a vector space.

To perform a search, your search query is converted to a vector, similar to your data vectors. The vector database then computes the similarity between the search query and the collection of data points in the vector space. The best thing is, vector databases can query large datasets, containing tens or hundreds of millions of objects, and still respond to queries in a tiny fraction of a second.

Without getting too much into details, one of the big reasons why vector databases are so fast is that they use Approximate Nearest Neighbor (ANN) algorithms to index data based on vectors. ANN algorithms organize indexes so that closely related vectors are stored next to each other. Check out this article to learn "Why is Vector Search so Fast" and how vector databases work.

Why are there different distance metrics? Depending on the Machine Learning model used, vectors can have ~100 dimensions or go into thousands of dimensions. The time it takes to calculate the distance between two vectors grows with the number of vector dimensions. Furthermore, some distance metrics are more compute-heavy than others. That might be a challenge when calculating distances between vectors with thousands of dimensions. For that reason, we have different distance metrics that balance the speed and accuracy of calculating distances between vectors.
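To make the search step concrete, here is a minimal sketch of an exact, brute-force search: the query vector is compared against every stored vector and the closest ones are returned. This is not the ANN indexing that vector databases use; it is the simple baseline that ANN avoids. The three-dimensional embeddings, the `collection` dictionary, and the `search` helper are all hypothetical and chosen only so that banana and apple sit close together.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def search(query, collection, k=2):
    """Score every stored vector against the query and return the top k.

    The work is proportional to (number of vectors) x (number of dimensions),
    which is why a full scan becomes slow on very large collections.
    """
    scored = [(name, cosine_similarity(query, vec)) for name, vec in collection.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Made-up toy embeddings; real embeddings come from a Machine Learning model.
collection = {
    "banana": [0.9, 0.8, 0.1],
    "apple": [0.8, 0.9, 0.2],
    "cat": [0.1, 0.2, 0.9],
}

query = [0.85, 0.85, 0.1]  # hypothetical embedding of a fruit-related query
results = search(query, collection, k=2)
for name, score in results:
    print(name, round(score, 3))
```

With these toy values the two fruit vectors rank ahead of the cat vector, mirroring the banana and apple example in the text.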
Distance Metrics convey how similar or dissimilar two vector embeddings are. These metrics are used in machine learning for classification and clustering tasks, especially in semantic search. In this article, we explore the variety of distance metrics (Cosine, Dot product, Euclidean, Manhattan, and Hamming), the idea behind each, how they are calculated, and how they compare to each other.

Vector databases, like Weaviate, use Machine Learning models to analyze data and calculate vector embeddings. The vector embeddings are stored together with the data in a database, and are later used to query the data. In a nutshell, a vector embedding is an array of numbers that is used to describe an object. For example, strawberries could have a vector of just a few numbers, although in practice the array would most likely be a lot longer. Note that the meaning of each value in the array depends on which Machine Learning model we use to generate it.

In order to judge how similar two objects are, we can compare their vector values by using various Distance Metrics. In the context of Vector Search, a Distance Metric is a function that takes two vectors as input and calculates a distance value between them. The distance can take many shapes: it can be the geometric distance between two points, an angle between the vectors, a count of vector component differences, and so on. Ultimately, we use the calculated distance to judge how close or far apart two vector embeddings are.
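The five metrics named above can each be written as a small function that takes two vectors and returns a number. The sketch below uses common textbook definitions and made-up example vectors; a given vector database may normalize, negate, or otherwise adjust some of these values, so treat it as an illustration rather than any particular product's implementation.

```python
import math


def dot_product(a, b):
    """Sum of component-wise products; larger means more aligned."""
    return sum(x * y for x, y in zip(a, b))


def cosine_distance(a, b):
    """1 minus cosine similarity; 0 means the vectors point the same way."""
    norm_a = math.sqrt(dot_product(a, a))
    norm_b = math.sqrt(dot_product(b, b))
    return 1 - dot_product(a, b) / (norm_a * norm_b)


def euclidean_distance(a, b):
    """Geometric (straight-line) distance between two points."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def manhattan_distance(a, b):
    """Sum of absolute component differences."""
    return sum(abs(x - y) for x, y in zip(a, b))


def hamming_distance(a, b):
    """Count of positions where the components differ."""
    return sum(1 for x, y in zip(a, b) if x != y)


# Made-up example vectors of equal length.
a = [1, 2, 3]
b = [1, 0, 5]

print(dot_product(a, b))         # 16
print(manhattan_distance(a, b))  # 4
print(hamming_distance(a, b))    # 2
print(round(euclidean_distance(a, b), 3))
print(round(cosine_distance(a, b), 3))
```

Note that the metrics differ in direction as well as scale: for the dot product a larger value means more similar, while for the distances a smaller value means closer.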
